Story

Hi! My name is Eugene Goh and I am a game engine developer. Welcome to my little corner of the Internet. What follows is my journey so far. It may well be as boring as it sounds, so if that does not interest you, feel free to skip on to my downloadable resume or the work examples on the left.

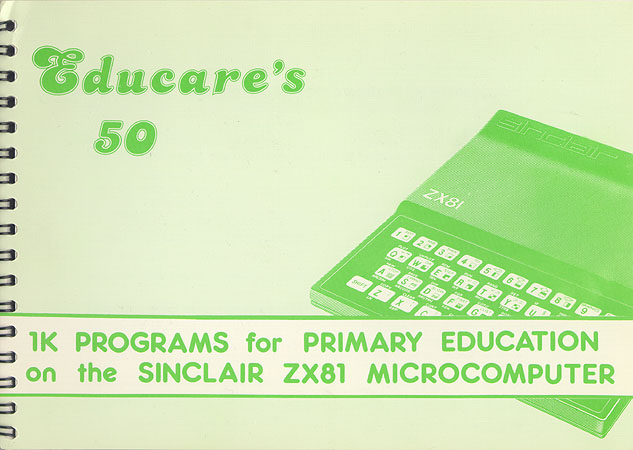

I was exposed at the age of 5 to machines like the ZX80, ZX81 and Spectrum, as well as the Acorn Atom. My father was keen to get into the new technology, writing a set of 50 educational games that he later published in a book. I became his de facto QA and even picked up the basics of BASIC. This was the foundation that would lie dormant for a decade until I inherited an IBM AT from my Dad’s office. Equipped with a pair of very thick manuals, I wrote my first full-fledged game, a Breakout clone in QBasic.

Perhaps it was this early influence that pushed me in the direction of IT as a profession. My first job was as an application developer, and I later moved on to bigger financial systems under the auspices of PwC Consulting and IBM. My love for gaming had not diminished during this time, and I got deeply engrossed in the MMO trend of the day. It was in one such game, Dark Age of Camelot, that I met the first indie game developer I found in the wild. Not more than a year later, I quit my job and took the plunge with Nexgen Studio.

I spent a year and a half there developing mobile and PC games, rubbing shoulders with VCs and learning the ropes. After that, I broke out on my own as a freelancer, working on many projects while teaching game development part-time at local tertiary institutions like NYP, Temasek Polytechnic and SAE. It was a neat little ecosystem I built around myself where I’d get wannabe indie developers, hook them up with government grants, and build their teams with interns and fresh graduates I had previously taught.

After 6 years in the wild, I wanted to see what true AAA development was like, so I sent out my resume and signed up with the first studio that responded, Gameloft’s new Auckland studio. The intention was to stay for 1 year, learn what I could, and then branch out into my own business. There was so much to learn there, and the mentors were so great, that I ended up staying for 5 years, until the studio was shut down in response to a hostile takeover by Vivendi.

After that stint, I spent some time mucking around in New Zealand on various projects with Rush Digital, Grinding Gear Games and Climax. Eventually, I moved out to the countryside at the base of Mount Taranaki to pursue my own tech, while working part-time for a non-gaming tech company and keeping a lookout for the next great adventure.